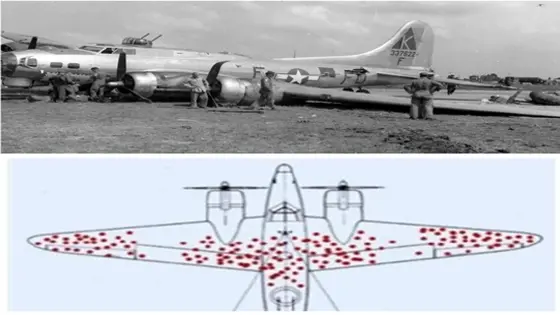

During World War II, statisticians from Abraham Wald’s team analyzed the planes that returned from battle and mapped the areas with the most bullet holes, as shown in the image illustrating this post. The goal was to decide where reinforcing the aircraft structure would do the most good: too much armor would make the plane heavy, hard to maneuver, and fuel-hungry, while too little would leave it vulnerable.

The distribution of bullet holes was not uniform: the red marks in the figure represent the areas that were hit most frequently. Based on this data, where should they reinforce?

The automatic answer, given without much thought, would be “where it receives the most shots.”

It makes sense: if they are shooting at the wings, it’s better to reinforce them to avoid damage. What were Abraham Wald’s recommendations?

- Do not reinforce the most frequently hit areas;

- Armor the areas with no marks, such as the engines.

The planes that were hit in the highlighted areas were still able to fly back. Those that were hit in the areas without marks never returned.

No one was analyzing the bullet holes on the planes that didn’t make it back.

This case illustrates survivorship bias, which is quite common when analyzing data to test a hypothesis: if we treat the only data we happen to have as sufficient, we overlook a significant share of the causes of the problem.
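To make the effect concrete, here is a minimal simulation sketch. Everything in it is an assumption made up for illustration, not historical data: the four section names, the per-hit loss probabilities, and the number of planes. Enemy fire is spread evenly over all sections, yet the returning planes show far fewer engine hits, simply because engine hits are the ones that keep planes from coming back.

```python
import random

# Hypothetical sections and per-hit loss probabilities
# (illustrative assumptions, not historical figures).
LOSS_PROB_PER_HIT = {"wings": 0.02, "fuselage": 0.03, "tail": 0.03, "engine": 0.40}
SECTIONS = list(LOSS_PROB_PER_HIT)


def simulate(n_planes=20_000, seed=0):
    """Return (hits on all planes, hits on returning planes) per section."""
    rng = random.Random(seed)
    all_hits = dict.fromkeys(SECTIONS, 0)
    survivor_hits = dict.fromkeys(SECTIONS, 0)
    for _ in range(n_planes):
        # Every section is equally likely to be hit: the fire is uniform.
        hits = [rng.choice(SECTIONS) for _ in range(rng.randint(1, 6))]
        # The plane returns only if it survives every hit it took.
        returned = all(rng.random() > LOSS_PROB_PER_HIT[s] for s in hits)
        for s in hits:
            all_hits[s] += 1
            if returned:
                survivor_hits[s] += 1
    return all_hits, survivor_hits


def shares(counts):
    """Convert raw hit counts into each section's share of the total."""
    total = sum(counts.values())
    return {s: counts[s] / total for s in counts}


all_hits, survivor_hits = simulate()
all_share = shares(all_hits)
survivor_share = shares(survivor_hits)
for s in SECTIONS:
    print(f"{s:9s} all planes: {all_share[s]:.1%}   "
          f"returning planes: {survivor_share[s]:.1%}")
```

Under these assumptions, the engine receives roughly a quarter of all hits, but its share among the hits counted on returning planes is noticeably smaller. An analyst who only sees the second column would conclude that the engine is rarely hit.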

Sometimes, the most important answer lies in the missing information. When analyzing a database, it is essential to observe both what is visible and what is not immediately apparent.

What the data does not answer is as important as what it does answer.

Since the missing information usually far exceeds what is available, it is necessary to ask the right questions.

Best regards and have a great week!